Futurists use super-forecasting and foresight modeling as the tools of our trade. These methodologies combine trending data sets with human behavioral models to anticipate system change and response. So when we see certain emerging data points, we tend to think many years out and extrapolate their cascade effects through our models. It is an 'occupational hazard', and it naturally leads to perceiving the world in a very different way.

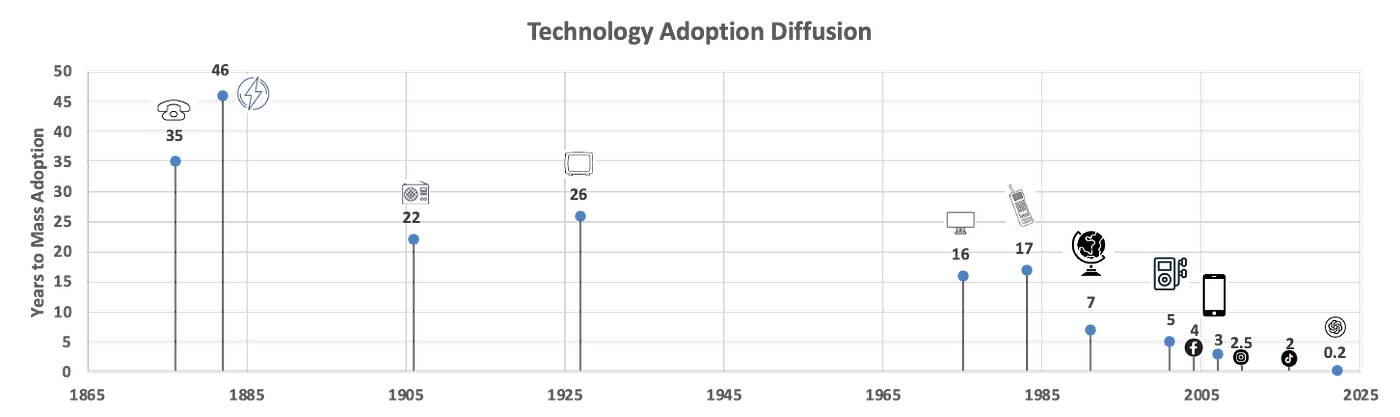

This core ability to extrapolate possible futures is increasingly a challenge, because most people perceive change as a linear shift that happens gradually over long periods of time. But technology adoption is accelerating, and advances in science and tech are arriving far faster than humans are used to, which is why we hear terms like hyper-scaling, exponentiality and the coming singularity used to describe our emergent world. It may be that the majority of humanity is not yet capable of grasping that it is the rate of change itself that will be so fundamentally chaotic to our future.

It is not AI, climate change, birth rates, immigration, longevity, tokenization, or quantum computers (which unlock new scientific paradigms) that are the threat per se. It is that change may happen so quickly around these pillars that our economic, political, legal, ethical and religious systems simply will not be able to absorb it in an ordered fashion. In other words, the change will still happen as fast as it can, but we won't adapt as quickly, creating what Alvin Toffler so aptly described as 'future shock'.

The Turing Test Achieved

When Alan Turing wrote his foundational paper "Computing Machinery and Intelligence" in 1950, he probably hoped it would take less than 75 years for his 'imitation game', the Turing Test, to be passed. AI is now accelerating at a pace that would astound even Turing.

"If a human evaluator cannot reliably distinguish a machine from a human in natural language conversation, the machine is said to have exhibited intelligent behavior indistinguishable from a human's." – a common summary of Alan Turing's imitation game

On March 31, 2025, researchers at UC San Diego posted a study to arXiv in which GPT-4.5, prompted to adopt a human persona in a three-party Turing Test, was judged to be the human 73% of the time. While this is not definitive proof of passing the so-called "Turing Test", the results clearly fit the basic criteria of Turing's yardstick.

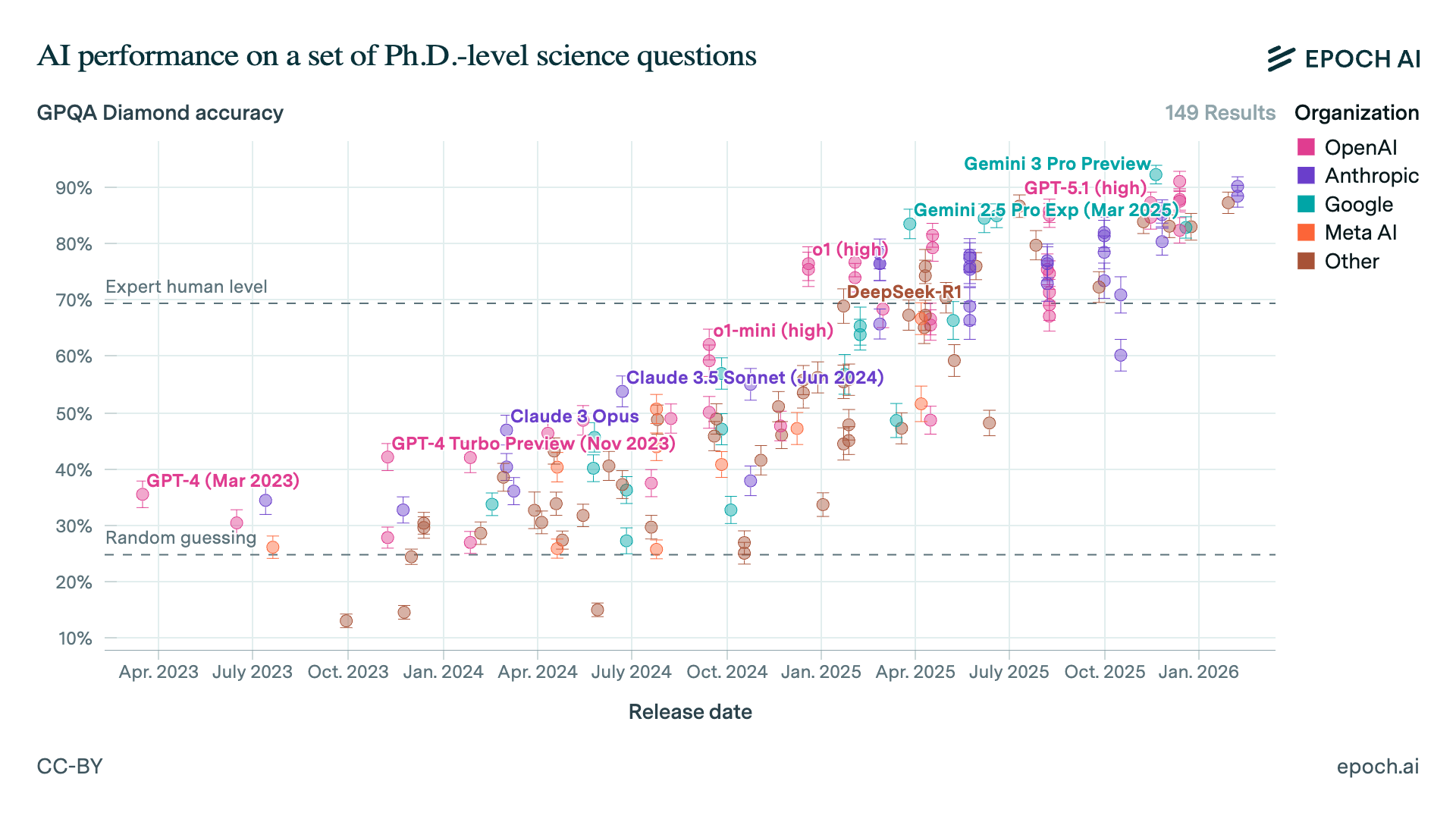

The GPQA Benchmark

A more rigorous academic approach to measuring the capabilities of Large Language Models (LLMs) is the GPQA (Graduate-Level Google-Proof Q&A) Benchmark. This benchmark, designed for AI models, consists of 448 extremely difficult, expert-written multiple-choice questions in biology, physics and chemistry. The questions are designed to be "Google-proof" in that they require deep domain expertise rather than just good search techniques. PhD-level human experts who take the test are reported to achieve only 69.7% accuracy collectively.

The GPQA Diamond subset is a higher-quality, more challenging subset of the main GPQA dataset. It contains 198 questions that both domain-expert annotators answered correctly but that most non-experts answered incorrectly.

In November of 2022, when the first LLMs were assessed on this benchmarking standard, they performed about as well as the average human, with scores of around 10% on the GPQA Diamond test regime. By January of 2026, Gemini 3 Pro, GPT-5.2 Pro, Claude Opus, Grok 4 and Kimi K2.5 were all regularly scoring in roughly the 90% range, well above PhD domain experts.

While GPQA is not designed specifically for establishing human-level IQ scores, we can infer that these models have moved from a human-equivalent IQ of about 90 to about 130 in the space of 3 years. If LLMs and their successor models continue to evolve at the current rate, by 2030 they will be producing results that put them in the top 0.1% of the population academically.
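To put those IQ figures in population terms, here is a minimal sketch using Python's standard library. It assumes the conventional IQ scale (a normal distribution with mean 100 and standard deviation 15), which is not stated in the text above; the function names are illustrative only.

```python
from statistics import NormalDist

# Assumption: IQ scores follow a normal distribution with mean 100, SD 15
# (the conventional scaling; not taken from the article itself).
iq = NormalDist(mu=100, sigma=15)


def share_above(score: float) -> float:
    """Fraction of the population scoring above the given IQ."""
    return 1.0 - iq.cdf(score)


def score_for_top_share(share: float) -> float:
    """IQ score at which the given top fraction of the population begins."""
    return iq.inv_cdf(1.0 - share)


for score in (90, 130):
    print(f"IQ {score}: about {share_above(score):.1%} of people score higher")

print(f"Top 0.1% begins near IQ {score_for_top_share(0.001):.0f}")
```

Under that assumption, an IQ of 130 sits in roughly the top 2.3% of the population, while the top 0.1% begins near an IQ of 146.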

AI Doesn't Need Consciousness to Be Disruptive

AI is getting smarter, faster, and by the GPQA measure alone it is collectively already smarter than all but the smartest humans in these benchmark academic domains. There continues to be a great deal of discussion about whether LLMs have achieved or will achieve true consciousness, or will simply reach the nebulous goal of "AGI" (Artificial General Intelligence). But those 'sound bite' metrics are somewhat of a distraction, because LLMs simply don't need sentience or general intelligence to be massively disruptive to employment or to society generally.

It also needs to be said that most of the AI that will disrupt human labor isn't LLMs at all – it's just hard-core industrial and agentic AI being deployed in the basic machinery of the economy – factories, transportation, back-office processing and operations.

The Investment Momentum

Meanwhile, global investment in AI has been steadily accelerating, to the point where there is now too much momentum for a single failure point to stop the experiment and let the status quo prevail. Global funding for Artificial Intelligence has experienced exponential growth over the past decade, increasing more than 10x since 2014.
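As a rough illustration of what a tenfold increase means year to year, the sketch below computes the implied compound annual growth rate and doubling time. The inputs are assumptions for illustration: exactly 10x growth over exactly 10 years (2014 to 2024), with a constant rate throughout. Actual funding growth was neither exactly 10x nor smooth.

```python
import math


def implied_annual_growth(multiple: float, years: float) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    if multiple <= 0 or years <= 0:
        raise ValueError("multiple and years must be positive")
    return multiple ** (1.0 / years) - 1.0


def doubling_time_years(annual_rate: float) -> float:
    """Years needed to double at a constant annual growth rate."""
    return math.log(2.0) / math.log(1.0 + annual_rate)


# Assumed inputs: 10x growth over the 10 years from 2014 to 2024.
rate = implied_annual_growth(10.0, 10.0)
print(f"Implied annual growth: {rate:.1%}")
print(f"Doubling time: {doubling_time_years(rate):.1f} years")
```

On those assumptions, 10x in a decade works out to about 26% compound growth per year, or a doubling roughly every three years.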

Corporate AI investment reached a record $252.3 billion in 2024, with total private investment in the sector rising over 44% year-over-year. In 2025, AI investment continued to surge, with global venture funding to AI-related companies exceeding $200 billion, representing around 50% of all global venture funding. The most valuable private companies in the world all have AI at their core, with SpaceX (+ xAI) now valued at $1.25 trillion and OpenAI valued at around $750-800 billion.

While there's plenty of talk of an AI bubble, there's very little chance of AI investment tanking and AI imploding to a point where business as usual is feasible. AI is the biggest bet the collective market has made in a new technological revolution since the foundation of the world's first stock market, and at that level of investment one has to believe that it will reshape industry and commerce totally.

The Bank of the Future

The market has made its intent very clear – human-less corporations are just too much of a drug for the capitalist adherents. The world of tomorrow will run on autonomous technologies as much as markets will allow, because they are going to be massive generators of capital growth.

But autonomous, machine-to-machine economies won't run on banking core systems, batch-based payments systems and signatures on pieces of paper. We need banking systems that are fit for purpose in an AI economy, and those are just now emerging. The bank of the future is a set of AI-based technologies. That's the only way banking reasonably develops from here.

About Brett King

I advise organizations on navigating the future of banking, technology, and society—bringing real-world experience from building the first mobile neo-bank and advising governments on fintech policy.